Aavid documents imply that for their heat sinks convection is the primary mode of heat transfer. Despite that, other than our attempts to "force" natural convection with all of our cooling holes, without a mechanical source for air movement the primary heat rejection with our heat sinks and amps is probably

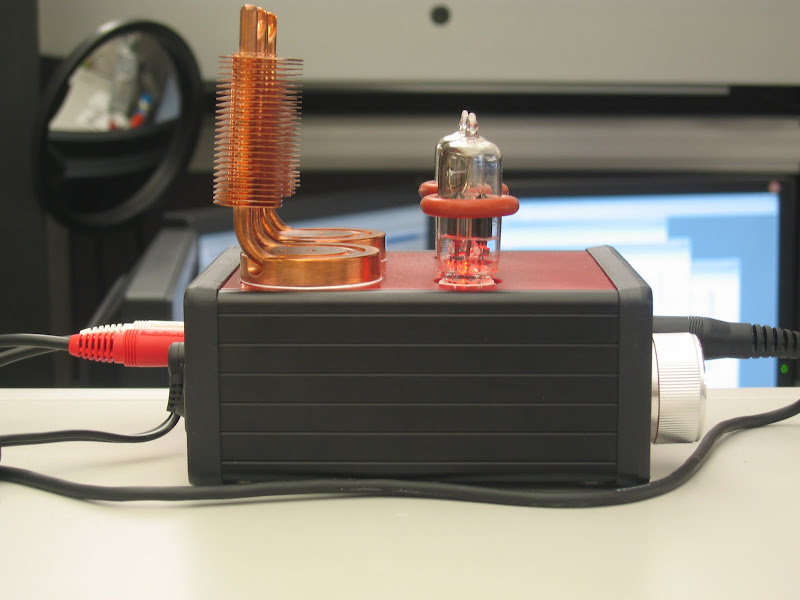

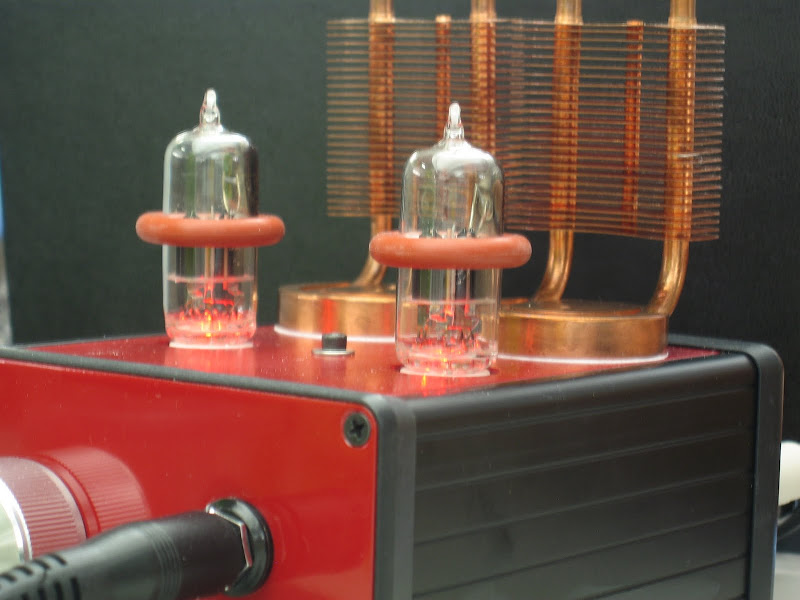

radiation. An example is the MiniMAX first prototype in my avatar - that amp has as many holes or more than any MAX I've built (especially on the bottom) - yet the pebble-vinyl surface is much different than any other case and most likely very low in radiation emissivity (black and smooth are best). In other words, the heat sinks on the PCB inside function perfectly in rejecting the heat to the case, but the case puts on the brakes by being unable to "radiate" the absorbed heat to its surroundings. The result is that amp runs hotter than any MiniMAX with the custom Beezar/Lansing anodized-aluminum case - assuming the convective cooling holes are equal in both cases.

An analogy that illustrates this is the common misconception that forcibly moving air through a hot attic (with a powered attic fan) will reduce the heat load on the interior of a house. Surprisingly, this only reduces the temperature of the air in the attic. Scientific studies have found that the primary mode of heat transfer is radiation from the underside of the roof to the joists and the top surface of the ceiling below. (Sort of like heat sinks boxed up in an amplifier case.) Lowering the temperature of the attic air is somewhat misdirected (although ambient air does have some effect on the surface temperatures in the space), since the primary mode of heat transfer to the living space below is neither conduction nor convection.

Convection and conduction transfer heat along a linear gradient: the rate is based on the simple temperature difference, along with the contact area and the heat transfer coefficient. Radiation, however, scales with temperature to the fourth power (strictly, with the difference between the fourth powers of the absolute temperatures of the surface and its surroundings). That's a huge driver, and the result is that heat rejection is immediately impacted by any lessening of the temperature delta (surface temp of the heat sinks minus ambient air), which is what happens if the ambient air, and thereby the adjacent surfaces, is at a higher temperature.
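For anyone who wants to play with the numbers, here is a minimal Python sketch of the two relationships, assuming the usual textbook forms: Newton's law of cooling, q = h·A·(Ts − Ta), for convection, and the Stefan-Boltzmann law, q = ε·σ·A·(Ts⁴ − Ta⁴) with temperatures in kelvin, for radiation. The function names, the heat transfer coefficient, the area, and the emissivity values are all placeholders I picked for illustration, not measurements from any of our amps or cases.

```python
# Rough comparison of convective and radiative heat rejection from a surface.
# All numeric inputs below are illustrative assumptions, not measured values.

SIGMA = 5.670374419e-8  # Stefan-Boltzmann constant, W/(m^2 K^4)


def c_to_k(temp_c):
    """Convert degrees Celsius to kelvin."""
    return temp_c + 273.15


def convective_watts(h, area_m2, surface_c, ambient_c):
    """Newton's law of cooling: linear in the temperature difference.

    h is the heat transfer coefficient in W/(m^2 K).
    """
    return h * area_m2 * (surface_c - ambient_c)


def radiative_watts(emissivity, area_m2, surface_c, surroundings_c):
    """Stefan-Boltzmann law: difference of fourth powers of absolute temps.

    Simplification: treats the surroundings as a large enclosure at a single
    uniform temperature.
    """
    ts = c_to_k(surface_c)
    ta = c_to_k(surroundings_c)
    return emissivity * SIGMA * area_m2 * (ts**4 - ta**4)


if __name__ == "__main__":
    area = 0.1        # m^2, assumed exposed case area
    h_natural = 5.0   # W/(m^2 K), assumed natural-convection coefficient
    surface = 60.0    # deg C, assumed case surface temperature

    for ambient in (20.0, 25.0, 30.0):
        q_conv = convective_watts(h_natural, area, surface, ambient)
        # Two hypothetical emissivities: a "good" and a "poor" radiator.
        q_rad_hi = radiative_watts(0.8, area, surface, ambient)
        q_rad_lo = radiative_watts(0.3, area, surface, ambient)
        print(f"ambient {ambient:4.1f} C: convection {q_conv:5.1f} W, "
              f"radiation {q_rad_hi:5.1f} W (e=0.8), {q_rad_lo:5.1f} W (e=0.3)")
```

Swap in your own surface temperature, area, and emissivity guesses to see how much of the total each mode carries. The radiation figure scales directly with emissivity, which is the effect I'm describing with the case finish.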

So what am I saying? If the ambient air in a room is warmer, the amp will run disproportionately hotter. This is not just the reduction in temperature difference between the surface of the heat sinks and the ambient air. Rather, the increased surface temperature of surrounding objects (by virtue of the hotter ambient air) also reduces the radiation heat transfer, which depends on the fourth powers of the surface temperatures involved.

P.S. I'm not saying that we shouldn't cut holes in our cases to improve cooling. Far from it: convection is still an extremely important mode of heat transfer. I'm only offering an explanation for why just a few degrees of elevated ambient temperature can result in an amp running disproportionately hotter.