I've read that DSEE originated from a computer database combined with a very CPU-intensive DSP resampler.

The unlimited version was shown once in Japan, and when running it used nearly 100% of the CPU capacity.

I don't think Sony will ever release it, but from what the ex-Sony engineer told me, DSEE is based on over two decades of database work and continuous research.

That was back in 2018, when Sony was still running the older DSEE AI upsampling on the main CPU. Now in 2022, there have already been many more performance and efficiency improvements thanks to the dedicated NPU inside the SoC. NPUs are orders of magnitude faster and more power-efficient than CPUs for AI tasks. AI software development kits can take advantage of all the different cores inside the SoC for AI computation, so you are not limited to the NPU: the GPU, DSP and various CPU cores can also work together to provide more performance.

Take a look at the performance chart for video object detection:

CPU: 8 fps (red)

NPU: 62 fps (blue)

https://www.nxp.com/company/blog/us...-processor:BL-MLIN-I-MX-8M-PLUS-APP-PROCESSOR
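To put those two chart numbers in perspective, here's a quick back-of-the-envelope calculation. It's only a sketch using the two frame rates quoted above; the variable names are mine, and it says nothing about how DSEE itself would scale on an NPU.

```python
# Frame rates quoted above from the NXP chart (video object detection).
cpu_fps = 8
npu_fps = 62

# How many times faster the NPU is on this one benchmark.
speedup = npu_fps / cpu_fps

# Time spent per frame, in milliseconds.
cpu_ms = 1000 / cpu_fps
npu_ms = 1000 / npu_fps

print(f"speedup: {speedup:.2f}x")
print(f"CPU: {cpu_ms:.1f} ms/frame, NPU: {npu_ms:.1f} ms/frame")
```

So for this particular benchmark the NPU comes out a bit under 8x faster than the CPU, going from 125 ms to about 16 ms per frame.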

This article is from 2018, back when Sony had released the older CPU-based DSEE AI:

https://www.sony.jp/feature/products/dseehx/

If you try to make full use of AI, power consumption will inevitably increase.

Chinen: That's an unavoidable side effect of "DSEE HX," which analyzes the scene of the music in real time. So when building it into products, we pursue low power consumption through an accumulation of small, careful refinements.

Please tell me specifically what kind of refinements you made.

Yamamoto: First of all, in the early stages of development, we ignored power consumption and generously incorporated cutting-edge AI technology learned from papers, along with the knowledge of the Sony Group, to create a version aiming for the ultimate in sound quality. Of course, this could not be built into a product as-is. At that rate, the battery would run out in just a few minutes (laughs). So next, we thought about how we could reduce power consumption to a level usable in mobile devices.

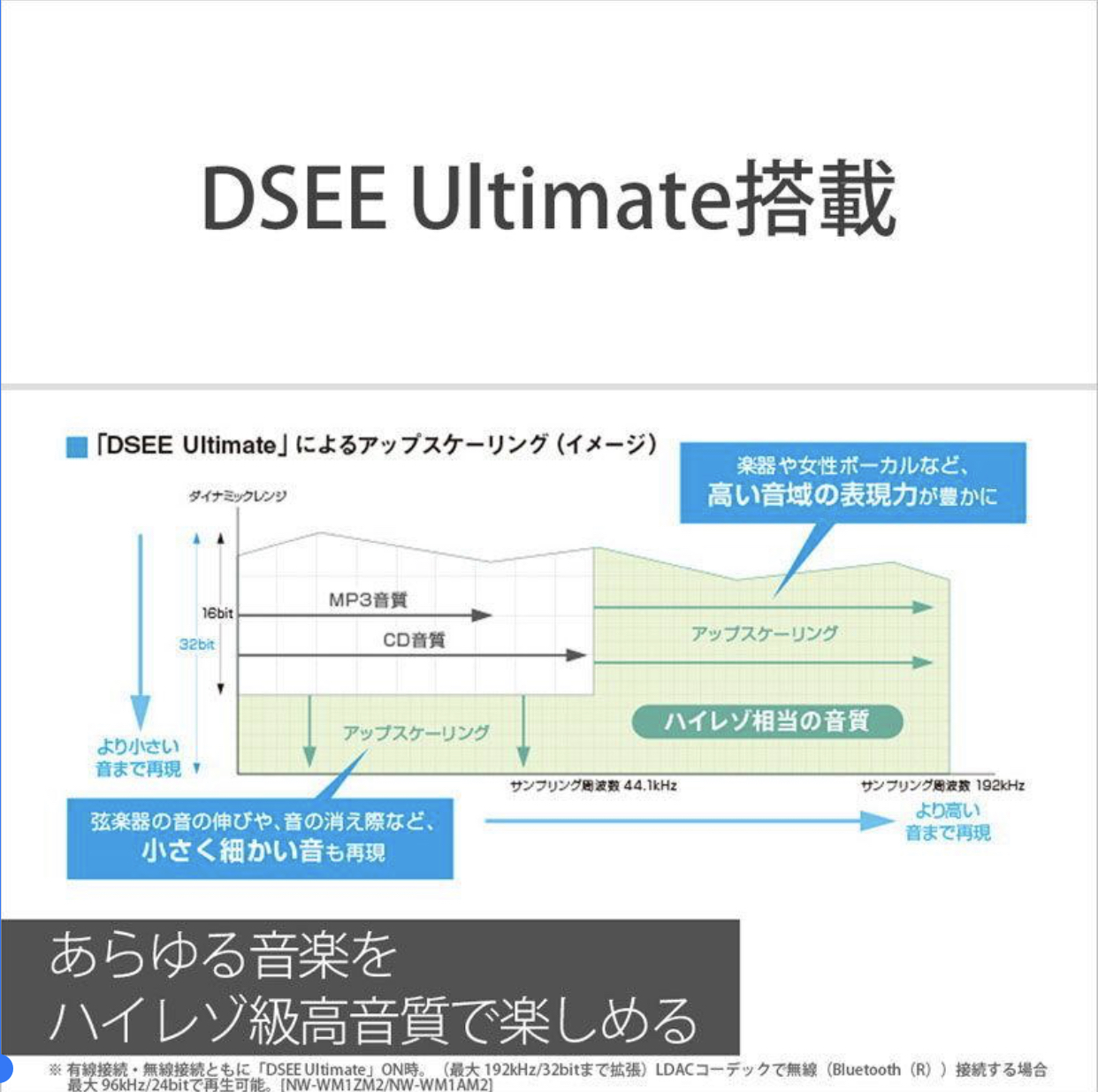

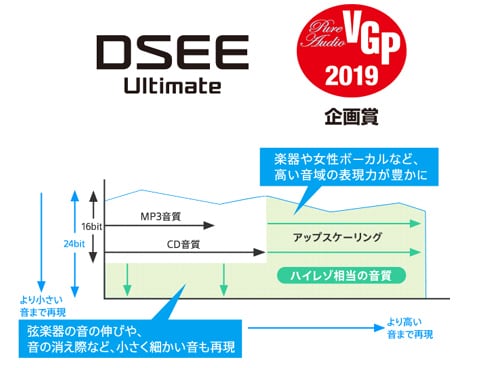

The first thing I did was reduce the amount of information to be processed. The "ultimate version" improved performance by taking in extremely detailed information, but it turned out that even when the input was limited to some extent, the effect was barely reduced.

In addition, most of the latest papers on AI that were referenced during development dealt with images, so there was room for reduction there as well. Since images and audio have very different signal characteristics, different approaches should be taken. Realizing this, we were able to further reduce power consumption by making the DNN specialized for audio.

Of course this isn't as good as trying it IRL, but I'm sure it's still a big help for anyone searching this thread.

I'd need to be able to double the battery capacity or so LOL