A Quick Note: Bits n’ Bytes

When you are dealing with digital audio, you are dealing with bits.

By convention, bus and network speeds are denoted either in bit/s (bits per second) or byte/s (bytes per second). In general, parallel interfaces are quoted in byte/s and serial in bit/s. On devices like modems, bytes may be more than 8 bits long because they may be individually padded out with additional start and stop bits; the figures below will reflect this. Where channels use line codes (such as Ethernet, Serial ATA and PCI Express), quoted speeds are for the decoded signal.

Where two values are listed, the first value is the downstream rate and the second value is the upstream rate.

All quoted figures are in metric decimal units, where:

I. 1 bit = base unit

II. 1 byte = 8 bits

III. 1 kbit = 1,000 bits

IV. 1 Mbit = 1,000,000 bits

V. 1 Gbit = 1,000,000,000 bits

VI. 1 kB = 1,000 bytes

VII. 1 MB = 1,000,000 bytes

VIII. 1 GB = 1,000,000,000 bytes

IX. 1 TB = 1,000,000,000,000 bytes
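To make the bit/byte distinction concrete, here is a small Python sketch (the function name is illustrative, not from any library) that converts a line rate in bit/s to byte/s, including the modem-style case where each 8-bit byte is framed by one start bit and one stop bit:

```python
def bytes_per_second(bit_rate, bits_per_byte_on_wire=8):
    """Convert a line rate in bit/s to byte/s.

    Plain 8-bit bytes use the default; asynchronous serial links (for example
    classic modems) frame each byte with a start and a stop bit, so pass 10.
    """
    return bit_rate / bits_per_byte_on_wire


print(bytes_per_second(9_600))      # 1200.0: plain 8-bit bytes
print(bytes_per_second(9_600, 10))  # 960.0: 8 data bits + start bit + stop bit
```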

Digital Audio Basics

Audio signals created by digital synthesis originate entirely in the digital domain, in which case analog-to-digital conversion does not take place.

After being sampled with an analog-to-digital converter (ADC), the digital signal may then be altered by digital signal processing (DSP), where it may be filtered or have effects applied.

The digital audio signal may then be stored or transmitted. Digital audio storage can be on a CD, a digital audio player, a hard drive, a USB flash drive, CompactFlash, or any other digital data storage device. Audio data compression techniques such as MP3, Advanced Audio Coding (AAC), Ogg Vorbis, or FLAC are commonly employed to reduce file size (FLAC is lossless; the other three are lossy). Digital audio can also be streamed to other devices.

The last step is to convert the digital audio back to an analog signal with a digital-to-analog converter (DAC). Like ADCs, DACs run at a specific sampling rate and bit resolution, but through the processes of oversampling, upsampling, and downsampling, this sampling rate may differ from the original sampling rate.

Sampling theorem

The Nyquist–Shannon sampling theorem states that perfect reconstruction of a signal is possible when the sampling frequency is greater than twice the maximum frequency of the signal being sampled,[4] or equivalently, when the Nyquist frequency (half the sample rate) exceeds the highest frequency of the signal being sampled. If lower sampling rates are used, the original signal's information may not be completely recoverable from the sampled signal.

For example, if a signal has an upper band limit of 100 Hz, a sampling frequency greater than 200 Hz will avoid aliasing and allow theoretically perfect reconstruction.
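The loss of information below the Nyquist rate can be checked numerically. This minimal Python sketch (the helper name is illustrative) samples a 100 Hz sine at 150 Hz, below the required 200 Hz, and shows that the samples are identical to those of an inverted 50 Hz sine, so the two cannot be told apart after sampling:

```python
import math


def sample_sine(freq_hz, fs_hz, n_samples):
    """Return n_samples of sin(2*pi*f*t) taken at the instants t = n / fs."""
    return [math.sin(2 * math.pi * freq_hz * n / fs_hz) for n in range(n_samples)]


# 100 Hz tone sampled at 150 Hz, below its 200 Hz Nyquist rate.
under = sample_sine(100, 150, 12)
# sin(2*pi*100*n/150) = sin(2*pi*n - 2*pi*50*n/150) = -sin(2*pi*50*n/150),
# so the samples match an inverted 50 Hz tone exactly.
alias = [-x for x in sample_sine(50, 150, 12)]
print(all(abs(a - b) < 1e-9 for a, b in zip(under, alias)))  # True

# At 250 Hz (above 200 Hz) the two tones give different samples.
print(sample_sine(100, 250, 2), [-x for x in sample_sine(50, 250, 2)])
```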

Oversampling

In some cases, it is desirable to sample at more than twice the desired system bandwidth, so that a digital filter can do most of the band-limiting and a weaker, gentler analog anti-aliasing filter can be used. This process is known as oversampling.
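A rough way to see the benefit: under the simplifying assumption that the analog filter must pass 20 kHz and reach its stopband by the Nyquist frequency, the room it has to roll off grows quickly with the oversampling factor. A sketch in Python (names and the 20 kHz passband are assumptions for illustration):

```python
AUDIO_BAND_HZ = 20_000   # assumed top of the audio passband
BASE_FS_HZ = 44_100      # CD sample rate


def transition_band_hz(passband_hz, fs_hz):
    """Room between the top of the passband and the Nyquist frequency (fs / 2),
    assuming the analog filter must reach its stopband by Nyquist."""
    return fs_hz / 2 - passband_hz


for factor in (1, 2, 4, 8):
    fs = BASE_FS_HZ * factor
    room = transition_band_hz(AUDIO_BAND_HZ, fs)
    print(f"{factor}x oversampling ({fs} Hz): {room:,.0f} Hz of transition band")
```

At 1x the analog filter has only about 2 kHz to fall from passband to stopband, which calls for a steep analog design; at 4x it has tens of kilohertz, and a digital filter can provide the sharp cutoff before the rate is reduced.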

Undersampling

Conversely, one may sample below the Nyquist rate. For a baseband signal (one that has components from 0 up to the Nyquist frequency), this introduces aliasing. For a passband signal (one that has no low-frequency components), however, there are no low-frequency signals for the aliases of the high-frequency components to collide with, so a high-frequency but narrow-bandwidth signal can be sampled at a much lower rate than its Nyquist rate.
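For a band occupying f_low to f_high with bandwidth B, the textbook condition for alias-free bandpass sampling is 2·f_high/n ≤ fs ≤ 2·f_low/(n - 1), for integers n from 1 up to floor(f_high/B). The sketch below (the function name is illustrative) lists those ranges; the 100 kHz to 110 kHz band is an arbitrary example:

```python
import math


def bandpass_sampling_ranges(f_low_hz, f_high_hz):
    """Return (n, fs_min, fs_max) ranges of sample rates that fold the band
    [f_low, f_high] down without it overlapping itself.
    fs_max is math.inf for n = 1 (ordinary Nyquist sampling)."""
    bandwidth = f_high_hz - f_low_hz
    n_max = math.floor(f_high_hz / bandwidth)
    ranges = []
    for n in range(1, n_max + 1):
        fs_min = 2 * f_high_hz / n
        fs_max = math.inf if n == 1 else 2 * f_low_hz / (n - 1)
        ranges.append((n, fs_min, fs_max))
    return ranges


for n, fs_min, fs_max in bandpass_sampling_ranges(100_000, 110_000):
    print(n, fs_min, fs_max)
```

The last range collapses to exactly 20 kHz, twice the 10 kHz bandwidth, which is the theoretical minimum for this band.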

While the goal of both analog and digital systems is to reproduce audio perfectly, there are several obstacles to achieving this, including:

Analog: noise floor in the capturing circuitry, plus inherent capacitance and inductance that limit the bandwidth of the system, and resistance that limits the amplitude.

Digital: quantization noise in the capturing circuitry; the sampling rate limits the bandwidth, and the bit resolution limits the dynamic range (the resolution of amplitude).
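How bit resolution limits dynamic range can be put in numbers with the standard result for an ideal quantizer driven by a full-scale sine wave: SNR ≈ 6.02·N + 1.76 dB for N bits. A short Python sketch (the function name is illustrative; real converters fall short of this ideal):

```python
import math


def ideal_sine_snr_db(bits):
    """Ideal SNR of a full-scale sine quantized to `bits` bits:
    20*log10(2**bits) + 10*log10(1.5), i.e. about 6.02*bits + 1.76 dB."""
    return 20 * math.log10(2 ** bits) + 10 * math.log10(1.5)


for bits in (16, 20, 24):
    print(bits, round(ideal_sine_snr_db(bits), 2))
```

For 16-bit CD audio this gives about 98 dB; each extra bit adds about 6 dB.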

In order to achieve better fidelity, higher quality components are required.

A digital audio signal starts with an analog-to-digital converter (ADC) that converts an analog signal to a digital signal. The ADC runs at a sampling rate and converts at a known bit resolution. For example, CD audio has a sampling rate of 44.1 kHz (44,100 samples per second) and 16-bit resolution for each channel (stereo).
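These CD figures fix the raw data rate, which ties back to the bits and bytes in the quick note at the start. A sketch in Python (names are illustrative); this is the audio payload only and ignores the error-correction and subcode data also stored on the disc:

```python
def pcm_bit_rate(sample_rate_hz, bits_per_sample, channels):
    """Raw (uncompressed) PCM payload rate in bits per second."""
    return sample_rate_hz * bits_per_sample * channels


cd_bps = pcm_bit_rate(44_100, 16, 2)
print(f"CD audio: {cd_bps:,} bit/s = {cd_bps / 1e6} Mbit/s = {cd_bps // 8:,} byte/s")
```

This gives 1,411,200 bit/s, or 176,400 byte/s, for 16-bit/44.1 kHz stereo.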

If the analog signal is not already band-limited, then an anti-aliasing filter is necessary before conversion to prevent aliasing in the digital signal. (Aliasing occurs when frequencies above the Nyquist frequency have not been removed by band-limiting and instead appear as audible artifacts at lower frequencies.)
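Where an unfiltered tone ends up can be predicted by folding its frequency about multiples of the sample rate. A minimal sketch in Python (the function name is illustrative), assuming a pure tone and ideal sampling:

```python
def alias_frequency(f_hz, fs_hz):
    """Apparent frequency of a pure tone f_hz after sampling at fs_hz,
    folded into the range 0 .. fs_hz / 2."""
    f = f_hz % fs_hz                  # f and f - k*fs give identical samples
    return fs_hz - f if f > fs_hz / 2 else f


print(alias_frequency(30_000, 44_100))  # 14100
print(alias_frequency(10_000, 44_100))  # 10000 (below Nyquist, unchanged)
```

With a 44.1 kHz sample rate, a 30 kHz tone that slips past the anti-aliasing filter reappears at 14.1 kHz, well inside the audible band.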

SIDE BAR A

Frequencies and descriptions

| Frequency (Hz) | Octave | Description |
|---|---|---|
| 16 to 32 | 1st | The human threshold of feeling, and the lowest pedal notes of a pipe organ. |
| 32 to 512 | 2nd to 5th | Rhythm frequencies, where the lower and upper bass notes lie. |
| 512 to 2048 | 6th to 7th | Defines human speech intelligibility; gives a horn-like or tinny quality to sound. |
| 2048 to 8192 | 8th to 9th | Gives presence to speech; where labial and fricative sounds lie. |
| 8192 to 16384 | 10th | Brilliance: the sounds of bells and the ringing of cymbals. In speech, the sound of the letter "S" (8000 to 11000 Hz). |
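The octave column follows from the 16 Hz starting point: each octave doubles the frequency, so octave n spans 16·2^(n-1) to 16·2^n Hz. A small Python sketch (an illustrative helper, not part of the original table):

```python
import math


def octave_number(freq_hz, base_hz=16.0):
    """Octave band (1st, 2nd, ...) of freq_hz when octave 1 spans 16-32 Hz,
    octave 2 spans 32-64 Hz, and so on, each doubling in frequency."""
    return math.floor(math.log2(freq_hz / base_hz)) + 1


for f in (20, 1_000, 3_000, 10_000):
    print(f, octave_number(f))
```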

Digital Reproduction: The Big Issue

The Jitter Bug: Your Digital Enemy

Jitter is an often-debated subject on web audiophile forums. As with cables, there tend to be believers and non-believers. The goal of this treatise is to educate and to share my current understanding of jitter.

Most audiophiles do not even realize that they have jitter until it is reduced. I liken it to looking through a window made of really old glass, from the days when glass had ripples and bubbles in it. There is a spreading and distortion that widens and defocuses some images and creates an overall mild distortion. It is still obvious what is on the other side of the window, but it is not coming through with crystal clarity. Reducing (you will notice that I do not say "removing") jitter is like replacing the glass with a clean, flat piece of glazing. Things are now visible in great detail and with a "vividness" that was not there with the rippled glass. Jitter can be blamed for much of the "fatigue" that results from listening to some digital playback systems, just as it is fatiguing to peer through rippled glass for any length of time.

Some Annoying History

Jitter has been with us since the inception of the CD format by Sony and Philips in 1982. It is a pervasive problem with all digital audio. For decades it has prevented digital audio, both CDs and computer-based audio, from competing with good vinyl and tape. Only recently have manufacturers become aware of the problem and developed improved chips and systems to deal with jitter.

What is Jitter?

Technically, playback jitter is the inaccuracy in the timing of the "ticks" of the clock that transfers the samples of digital data into the D/A converter chip. Data in a digital system is usually moved from one point to another under the control of a clock. For D/A conversion to work, a new data word must be presented to the D/A converter periodically, at a fixed frequency; the system clock or clocks do this. In an ideal world, each transfer of data to the D/A converter on a clock tick would occur at a precise point in time. If some data transfers occur a tiny bit early and others a tiny bit late, this is jitter.

It is like a timepiece that ticks away the seconds, where the duration of each second is not exactly one second, but over a large number of seconds the timepiece still keeps accurate time. Some intervals are slightly short and others slightly long, but their average still gives accurate time. Likewise, when digital audio is played back, the clock "ticks" that present each new data word to the D/A converter can have shorter and longer intervals from one tick to the next.

The effect of this jitter on the D/A conversion and the analog waveform is frequency modulation, the same type of modulation that is used for FM radio. The difference is that with FM radio, the carrier frequency is fixed and the music signal modulates the frequency of the carrier wave. With digital audio, the music is the carrier and the jitter is the modulation. This makes it a much more complex signal than even FM radio.

The jitter associated with digital streaming audio is usually a mix of correlated and uncorrelated jitter: correlated jitter is somehow related to the music data or waveform, while uncorrelated jitter is usually random. Jitter has both an amplitude and a frequency component. We will later discuss which of these I believe is more important.
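The effect of timing errors on the output can be simulated. The sketch below is a simplified model of my own framing, not a measurement method: it treats jitter as purely random (uncorrelated) Gaussian timing error on each sample instant and compares the simulated signal-to-error ratio with the well-known small-jitter approximation SNR ≈ -20·log10(2π·f·σ), where f is the tone frequency and σ the RMS jitter. Correlated jitter is not modelled, and the function names are illustrative.

```python
import math
import random


def jitter_snr_db(tone_hz, fs_hz, rms_jitter_s, n_samples=100_000, seed=0):
    """Signal-to-error ratio (dB) of a sine whose samples are produced at
    instants disturbed by Gaussian (random, uncorrelated) timing error."""
    rng = random.Random(seed)
    signal_power = 0.0
    error_power = 0.0
    for n in range(n_samples):
        t_ideal = n / fs_hz
        t_actual = t_ideal + rng.gauss(0.0, rms_jitter_s)  # jittered clock tick
        ideal = math.sin(2 * math.pi * tone_hz * t_ideal)
        actual = math.sin(2 * math.pi * tone_hz * t_actual)
        signal_power += ideal ** 2
        error_power += (actual - ideal) ** 2
    return 10 * math.log10(signal_power / error_power)


def jitter_snr_formula_db(tone_hz, rms_jitter_s):
    """Small-jitter approximation: SNR = -20*log10(2*pi*f*sigma)."""
    return -20 * math.log10(2 * math.pi * tone_hz * rms_jitter_s)


print(round(jitter_snr_db(10_000, 44_100, 1e-9), 1))
print(round(jitter_snr_formula_db(10_000, 1e-9), 1))  # 84.0
```

For a 10 kHz tone and 1 ns RMS of random jitter, both figures come out near 84 dB; in this model every tenfold increase in jitter costs 20 dB.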

Difference between Audio Streaming and other data transfers

Digital audio streaming is a "real-time" process, meaning that the actual timing of the transfer of each data bit from the source to the D/A converter matters and must be as precise as possible. Data transfers to a disk or a printer are not real-time, because there is no deadline the data must meet to prevent errors. The data arrives whenever it does, and then the device does its job with it, either writing it to the disk or storing it in a print buffer. If the data does not arrive in time to be written to a particular sector of a disk, the hardware simply waits for the disk to rotate again. Streaming audio data, on the other hand, must arrive at precise time intervals so that the D/A converter can create an accurate representation of the original recording. If the data does not "keep up", dropouts will occur and the D/A converter will fall out of sync. The clock that moves the data into the D/A converter cannot miss any "ticks", and each tick must be precisely placed in time. An audio data transfer therefore requires both 1) accurate data and 2) accurate timing, whereas a non-real-time transfer only requires accurate data.

Recording Jitter

The recording of digital data is essentially a periodic sampling of a voltage (the voltage being the music waveform created by an instrument or microphone), where the period is, in theory, very precise. These captured sample voltages are then converted to digital data words and stored in memory or on recording media. No timing information is actually stored with the data samples; the timing is implicit in the fact that the samples were taken at a fixed rate. There is, however, control information, which specifies the sample frequency that should be used for playback, and other information such as pre-emphasis and word length. If the clock that captured these data samples had jitter, then that jitter is a characteristic of the samples and cannot realistically be eliminated during playback. This recording jitter is always there in the music file. Playback jitter, however, is another matter.

Playback Jitter Contributions

Playback jitter originates from a large number of contributors, whose effects usually add up. They range from the master clock, which has its own jitter, to logic devices, to the mechanical system that spins a CD. One digital cable can even add more jitter than another. Each contributor adds more jitter to the signal as it makes its way to the D/A converter. The sum of all these contributions is the system jitter.
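How the contributors combine depends on whether they are correlated. For independent random jitter sources the variances add, so the RMS values combine as the square root of the sum of squares; correlated contributions can add directly, which is worse. A sketch in Python, with made-up contributor values used only for illustration (the function name is also illustrative):

```python
import math


def total_rms_jitter(contributions_s):
    """Combine independent random jitter sources given as RMS values (seconds).
    Independent variances add, so RMS values add in quadrature."""
    return math.sqrt(sum(j ** 2 for j in contributions_s))


# Hypothetical RMS contributions, chosen only for illustration.
contributors_s = {
    "master clock": 3e-12,
    "S/PDIF receiver PLL": 40e-12,
    "logic buffers": 10e-12,
}
total_s = total_rms_jitter(contributors_s.values())
print(f"total: about {total_s * 1e12:.1f} ps RMS")
```

Note how the largest contributor dominates: here the hypothetical 40 ps PLL term sets the total (about 41 ps) almost on its own.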

Here is a lengthy, but probably incomplete, list of jitter contributors, including how each of them can or might add jitter to a digital audio system:

1. Master Clock Jitter

This is the source clock for all of the data-streaming timing, usually a quartz-crystal-controlled oscillator at a very high frequency. It may be an 11.2896 MHz clock in a CD player for data sampled at 44.1 kHz, or, in computer audio systems, a clock that is generated in software from an even higher-frequency CPU clock. The jitter of these clocks is intrinsic to the crystal device, but it also depends on the design of the oscillator circuit. When the master clock is generated in software, the jitter can also depend on the program code.
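The 11.2896 MHz figure is exactly 256 times the 44.1 kHz sample rate. A trivial Python sketch (illustrative names) of this integer-multiple relationship; the 256/384/512 ratios are common choices, not a requirement:

```python
def master_clock_hz(sample_rate_hz, ratio=256):
    """Master clock frequency as an integer multiple of the sample rate."""
    return sample_rate_hz * ratio


for ratio in (256, 384, 512):
    print(f"{ratio} x 44.1 kHz = {master_clock_hz(44_100, ratio) / 1e6} MHz")
```

The 48 kHz family of sample rates uses 12.288 MHz (256 × 48 kHz) for the same reason.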

2. Servo-system/rotational jitter

This is the system in the CD player or transport that determines the spin rate of the CD. It is an electromechanical system. Even though most modern CD players buffer the data to create some tolerance to this jitter, there is usually a PLL (phase-locked loop) involved, which is still somewhat susceptible to jitter. For newer players that completely buffer the data at high speed from a CD-ROM reader into a memory buffer, this jitter is not an issue.

3. Jitter from the pits on a CD

These are the pits in the CD media that represent the recorded data. Variation in the spacing of these pits results in jitter when the data is read. Commercial CDs pressed from a glass master generally have more variation in pit locations than a CD-R written at 1X speed on a good CD-R writer. Even though most modern CD players buffer the data to create some tolerance to this jitter, there is usually a PLL (phase-locked loop) involved, which is still somewhat susceptible to jitter. To determine whether your player is susceptible, a simple experiment is to copy ("clone") a commercial CD onto a CD-R and listen for playback differences from the commercial version. For newer players that completely buffer the data at high speed from a CD-ROM reader into a memory buffer, this jitter is not an issue.

4. S/PDIF conversion

The S/PDIF interface was created by Sony and Philips to simplify the interconnection of digital audio devices. Its deficiency is that it embeds the serial clock in the serial data stream in order to achieve single-signal cabling. A superior interface would have carried separate clock and data signals, but we don't have this, so we live with S/PDIF. The transmitter must encode the data and clock into a single signal, and the receiver must then recover the clock(s) from the data stream. The process of recovering the clocks can introduce new jitter, since a PLL is usually involved.
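S/PDIF carries its data bits using biphase-mark coding: every bit cell starts with a level transition, and a 1 adds a second transition in the middle of the cell. Those guaranteed transitions are the embedded clock that the receiver's PLL must lock to. A minimal Python sketch of the coding (function names are illustrative; the real frame format, including the preambles that deliberately break this rule to mark frame boundaries, is not modelled):

```python
def bmc_encode(bits, start_level=0):
    """Biphase-mark encode into two half-cells per bit. The level always flips
    at the start of a bit cell (the embedded clock); a 1 adds a mid-cell flip."""
    level = start_level
    halves = []
    for bit in bits:
        level ^= 1                 # transition at every cell boundary
        halves.append(level)
        if bit:
            level ^= 1             # extra mid-cell transition encodes a 1
        halves.append(level)
    return halves


def bmc_decode(halves):
    """Recover bits: a cell whose two halves differ carries a 1."""
    return [int(halves[i] != halves[i + 1]) for i in range(0, len(halves), 2)]


data = [1, 0, 1, 1, 0, 0, 1]
line = bmc_encode(data)
print(line)
print(bmc_decode(line) == data)  # True
```

Because the decoder only asks whether the two halves of a cell differ, the recovered data does not depend on signal polarity; the timing of every edge, however, is what the receiver uses to regenerate its clock, which is where jitter enters.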

5. Logic buffering

The digital audio data must make its way through the system over wires/traces and sometimes through buffers, such as the buffer to drive the S/PDIF cable. Each of these buffers has finite reaction times and imprecise detection of changing signal levels. What this means is that even though the signal may not have much jitter coming into the buffer, it may exit with additional jitter. This jitter is a result of the speed of the device, thermal effects on the silicon die, power delivery on the die and even transmission-line effects.

6. Power subsystem

The DC power applied to each of the devices that must process or transmit the digital audio signal is critical. If this power varies in voltage, the devices will react differently to the applied digital signals. Power "noise" as it is referred to is probably one of the largest contributors to jitter. Voltage changes or "voltage droop" can happen anywhere on a circuit board, power cabling, or even on the silicon itself. Changes in power voltage will change the speed and reaction times of digital logic that is transmitting the digital signals resulting in jitter.

7. Toslink optical conversion

Optical conversion adds another layer of buffering on both the transmitting and receiving ends of the S/PDIF interface. This additional layer in itself adds jitter, whether it is optical or not, because of the logic-buffering effects described in item 5. However, due to its complexity, the optical interface adds more jitter than a simple logic buffer. For that reason, Toslink has higher jitter, and lower performance, than a well-designed S/PDIF coax interface.

8. Digital Cables

Cables don't actively add jitter to the signal; however, they can slow the signal transitions, or "edges". When the edges are slowed, the receiver or buffer at the cable's destination is less able to detect each transition at the correct time, which results in jitter.
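A common first-order estimate makes the edge-speed argument quantitative: the timing error at the receiver's threshold is roughly the voltage noise divided by the slope (slew rate) of the edge. A sketch in Python; the 10 mV noise figure is hypothetical, the 0.5 V swing is roughly the nominal consumer S/PDIF coaxial level, and the function name is illustrative:

```python
def edge_timing_error_s(noise_v, swing_v, rise_time_s):
    """First-order timing error where a noisy edge crosses the receiver threshold:
    voltage noise divided by edge slope, with the slope estimated from a
    10%-90% rise time as 0.8 * swing / rise_time."""
    slew_v_per_s = 0.8 * swing_v / rise_time_s
    return noise_v / slew_v_per_s


NOISE_V = 0.010   # hypothetical noise at the receiver input
SWING_V = 0.5     # roughly the nominal consumer S/PDIF coax level
for rise_ns in (5, 10):
    err = edge_timing_error_s(NOISE_V, SWING_V, rise_ns * 1e-9)
    print(f"{rise_ns} ns edge: about {err * 1e12:.0f} ps timing error")
```

In this model, slowing the edge from 5 ns to 10 ns doubles the timing error, from about 125 ps to about 250 ps, for the same noise.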

9. Transmission-line effects

Transmission-line (TL) effects are usually more of a problem on the S/PDIF coaxial cable, but may also be present on long traces on circuit boards. They occur because the signal transitions are very fast, and as a result, reflections occur on the wire or trace. A reflection from an earlier signal transition can return to the destination and "push", or displace, a later signal edge, causing jitter. Transmission lines must be properly terminated and impedance-matched in order to minimize reflections and the resulting jitter. There are further steps that can be taken to minimize this effect, such as using a longer S/PDIF cable.
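One way to reason about the cable-length suggestion: a reflection that bounces off the receiver and then off the source end arrives back at the receiver after one round trip, 2L/v. A sketch in Python, assuming a velocity factor of 0.66 (typical of solid-polyethylene coax; your cable may differ) and an illustrative helper name:

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458


def reflection_round_trip_s(cable_length_m, velocity_factor=0.66):
    """Delay of a re-reflected edge: it travels back to the source end and
    forward again, i.e. two extra cable lengths at the cable's signal speed."""
    return 2 * cable_length_m / (velocity_factor * SPEED_OF_LIGHT_M_PER_S)


for length_m in (0.5, 1.0, 1.5, 2.0):
    print(f"{length_m} m: {reflection_round_trip_s(length_m) * 1e9:.1f} ns")
```

A 1.5 m cable gives roughly 15 ns. The argument for a longer cable is that the re-reflection then lands after the receiver has already detected the edge rather than on it; whether that holds depends on the actual rise times and terminations involved.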

10. Printed circuit board effects

There are at least two effects on a circuit board that can cause jitter: signal crosstalk and ground bounce. Crosstalk occurs when traces carrying high-speed signals are closely spaced, so that one signal induces a voltage on the other; that induced voltage shifts the moment at which the affected signal's edges cross the logic threshold, which is jitter. Ground bounce occurs when the signal return current sees a high impedance on the circuit board due to ground-plane splits or long return paths. This creates a voltage drop in the ground plane or return path, which shifts the signal in voltage and can result in jitter.

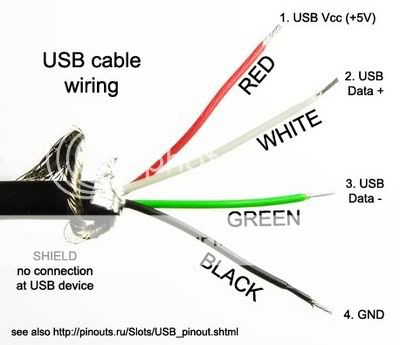

Jitter and USB

There is much misinformation on the forums about USB for audio streaming. USB is a fairly jittery interface on its own. Some of the integrated circuits that were created to provide easy plug-and-play USB audio interfaces don't do enough to reduce USB jitter IMO. Many manufacturers adopted these plug-and-play devices to add USB to their DAC products quickly and cheaply. Unfortunately, the less-than-stellar reviews that ensued left some of these manufacturers regretting those decisions. This gave USB a bad name in many circles.

Fortunately, low-jitter USB interfaces are now available that not only support 24/96 but even compete with the best CD playback devices. In 2009, I believe we will see USB support for 24/192 and even lower-jitter interfaces. USB is IMO the wired audio interface that will be most prevalent in the near future.
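Why 24/192 is harder over USB can be seen from the full-speed USB budget: isochronous transfers move at most 1023 bytes in each 1 ms frame. A sketch in Python, assuming 24-bit samples packed in 3-byte slots and ignoring protocol overhead (names are illustrative):

```python
FS_MAX_ISO_BYTES_PER_FRAME = 1023  # USB full-speed isochronous packet limit
FRAMES_PER_SECOND = 1000           # full-speed frames are 1 ms long


def bytes_per_frame(sample_rate_hz, bytes_per_sample=3, channels=2):
    """Average audio payload per 1 ms frame (3-byte slots assumed for 24-bit)."""
    return sample_rate_hz * bytes_per_sample * channels / FRAMES_PER_SECOND


for rate in (44_100, 96_000, 192_000):
    need = bytes_per_frame(rate)
    fits = need <= FS_MAX_ISO_BYTES_PER_FRAME
    print(f"24/{rate / 1000:g}: {need:.1f} bytes per frame, fits full-speed: {fits}")
```

24/96 stereo needs 576 bytes per frame and fits; 24/192 needs 1152 and does not, which is why it calls for high-speed USB rather than full-speed.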

Jitter and Networked audio

Networked audio (Ethernet), both wired and WiFi, is a unique case. Because the data is transmitted in packets with flow control, retries on errors, and buffering at the end-point device, it is not as much of a real-time transfer as USB, S/PDIF or FireWire. The computer transmitting the data packets must still "keep up" the pace to prevent dropouts, but the real-time nature of the transfer is looser. Unlike with the other protocols, there can be dead times when no data is being transferred. Networking also bypasses the computer's audio stack, since the network treats all data essentially the same. This avoids KMixer on Windows XP systems and the audio stacks on Mac and Windows Vista. Because of Ethernet's packet-transfer protocol and the data buffering at the end point, the jitter of the clock in the computer is a non-issue; the only clock that matters is the one in the end-point device. Examples of end-point devices are the Squeezebox, Duet and Sonos. In principle, this is the ideal situation. The only problem that can occur is network overload or WiFi interference, which may cause occasional dropouts. The problem for audiophiles is that the majority of these end-point devices were designed with high-volume manufacturing and low cost as requirements, with performance taking a lower priority. As a result, their jitter is higher than it could be, when in principle they should be the lowest-jitter source devices available.
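The claim that only the end-point clock matters can be illustrated with a toy model. In this Python sketch (all names and numbers are hypothetical), packets arrive after a random network delay, but the end point plays each frame on a schedule set only by its own clock after an initial prefill; sender timing can cause dropouts, but it never shifts the playback instants:

```python
import random


def count_underruns(num_packets, frames_per_packet, fs_hz, max_delay_s,
                    prefill_s, seed=1):
    """Toy networked end point. Packet k is sent on schedule but arrives after a
    random delay of up to max_delay_s. The end-point DAC plays frame i at
    prefill_s + i / fs_hz, timed only by its local clock. Returns how many
    packets arrive after their first frame is due (i.e. dropouts)."""
    rng = random.Random(seed)
    underruns = 0
    for k in range(num_packets):
        nominal_s = k * frames_per_packet / fs_hz
        arrival_s = nominal_s + rng.uniform(0.0, max_delay_s)
        due_s = prefill_s + nominal_s          # depends only on the local clock
        if arrival_s > due_s:
            underruns += 1
    return underruns


# 10 ms packets at 44.1 kHz with up to 50 ms of (hypothetical) network delay
print(count_underruns(1000, 441, 44_100, max_delay_s=0.05, prefill_s=0.10))  # 0
print(count_underruns(1000, 441, 44_100, max_delay_s=0.05, prefill_s=0.0))
```

With 100 ms of prefill covering the 50 ms worst-case delay there are no dropouts; with no prefill, nearly every packet arrives late.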

Jitter and Re-Clockers

There are a number of re-clockers available, some older and some newer technologies. There are three main types of re-clockers:

1) A true re-clocker that uses a free-running oscillator and stores data in a buffer

2) Re-clocker that uses a series of PLLs (Phase-Locked-Loops) to reduce jitter

3) Re-clocker that uses ASRC (Asynchronous Sample-Rate Conversion) to reduce jitter (also a PLL)

You will notice that I always use the terms: "reduce jitter" or "extremely low jitter", even with my own products. This is because it is impossible to completely eliminate jitter, contrary to the claims of some manufacturers.

The true re-clocker (1) can deliver the lowest jitter of the three types because it is not influenced by any outside signals.

A series of PLLs (2) will reduce jitter, but PLLs are affected to some extent by the jitter in the input signal. The more PLLs that are cascaded, and the lower the bandwidth of each PLL's loop filter, the better the jitter reduction will be. Some high-end DACs use this technique.
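As a simplified model, a PLL passes input jitter below its loop bandwidth and attenuates jitter above it. Treating each stage as a first-order low-pass filter (an assumption: real PLLs are usually second-order and can show peaking) shows why cascading stages and narrowing the loop bandwidth help. A Python sketch with illustrative names:

```python
import math


def cascaded_jitter_attenuation_db(jitter_freq_hz, loop_bw_hz, stages=1):
    """Jitter transfer (dB) through `stages` identical PLLs, each crudely
    modelled as a first-order low-pass with corner loop_bw_hz."""
    ratio = jitter_freq_hz / loop_bw_hz
    one_stage = 1 / math.sqrt(1 + ratio ** 2)
    return 20 * math.log10(one_stage ** stages)


for stages in (1, 2, 3):
    print(stages, round(cascaded_jitter_attenuation_db(10_000, 100, stages), 1))
```

With a 100 Hz loop bandwidth, 10 kHz jitter is attenuated by about 40 dB per stage in this model, while jitter well below 100 Hz passes through largely untouched.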

The ASRC up-sampler of (3) is somewhat sensitive to incoming jitter and has the disadvantage of changing the data by up-sampling it. If you don't like the sound of a particular hardware up-sampler, there is nothing you can do about it. This approach is found in many modern DACs.

Re-clockers of the same type are not all equal either. The jitter of the master clock in the re-clocker can vary. The design of the circuits, the power subsystem and the circuit-board layout all have a huge impact on a re-clocker's performance. To achieve extremely low jitter levels, all of these disciplines must be mastered and the implementation must be flawless.

Low-jitter clock technology has improved dramatically in the last 2 years, so newer re-clockers will usually take advantage of this.

Jitter Correlation to Audibility

The correlation of jitter measurements with audibility is in its infancy IME. The problems start with how jitter is characterized. Manufacturers of crystal oscillators generally specify jitter as an RMS jitter amplitude. The problem is that they often neglect to state that this figure covers only jitter at frequencies of 10 kHz and higher, and no spectral or frequency-content information is given. This makes it very difficult to tell which oscillators will have audible or objectionable jitter.
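The missing information becomes clearer when RMS jitter is derived from an oscillator's phase-noise curve, because the result depends entirely on which band of offset frequencies is integrated. A Python sketch using the standard conversion σ = sqrt(2·∫L(f)df) / (2π·f0); the phase-noise profile is invented for illustration, the integration is a crude trapezoid, and the function name is illustrative:

```python
import math


def rms_jitter_from_phase_noise(carrier_hz, points):
    """RMS jitter (seconds) from single-sideband phase noise L(f).
    `points` is a list of (offset_hz, dBc_per_hz) pairs in increasing offset
    order; integrate with a trapezoid in linear power units, then
    sigma_t = sqrt(2 * integral) / (2 * pi * carrier_hz)."""
    integral = 0.0
    for (f1, l1), (f2, l2) in zip(points, points[1:]):
        p1, p2 = 10 ** (l1 / 10), 10 ** (l2 / 10)
        integral += 0.5 * (p1 + p2) * (f2 - f1)
    return math.sqrt(2 * integral) / (2 * math.pi * carrier_hz)


CARRIER_HZ = 11_289_600  # 256 x 44.1 kHz master clock
# Hypothetical profile: noisy close in (below 1 kHz), clean farther out.
spec_band = [(10_000, -150), (20_000_000, -150)]                 # ">= 10 kHz" view
audio_band = [(10, -110), (1_000, -110), (1_000, -150), (20_000, -150)]

print(f"10 kHz to 20 MHz: {rms_jitter_from_phase_noise(CARRIER_HZ, spec_band) * 1e12:.2f} ps")
print(f"10 Hz to 20 kHz:  {rms_jitter_from_phase_noise(CARRIER_HZ, audio_band) * 1e12:.2f} ps")
```

For this made-up profile, the figure integrated from 10 kHz upward (about 2.8 ps) says nothing about the close-in noise below 1 kHz, which alone contributes about 2 ps within the audio band.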

For instance, Empirical Audio uses two oscillators that are both specified at 2 psec RMS jitter, yet the two sound radically different to me when used in a re-clocker in a resolving audio system. This leads me to believe that the spectrum, or frequency content, of the jitter is as important as, or maybe even more important than, the amplitude. I also believe that correlated jitter, that is, jitter with a relationship to the data pattern or audio signal, is more audible than random jitter. This seems to be the consensus in a number of AES papers.

Studies by the AES (analysis, not human testing) conclude that these are the thresholds of audibility:

[1] 120psec P-P jitter audibility threshold for 16-bit DAC and 8psec P-P jitter audibility threshold for 20-bit DAC

[2] 20psec P-P of data-correlated jitter audibility threshold at certain frequencies and "A simple model of jitter error audibility has shown that white jitter noise of up to 180psec P-P can be tolerated in a DAC, but that even lower levels of sinusoidal jitter may be audible"
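One common worst-case criterion, not necessarily the method used in the studies cited, reproduces figures close to those in [1]: keep the sample-timing error small enough that a full-scale 20 kHz sine, at its steepest slope, moves by less than half an LSB. A Python sketch (the function name is illustrative):

```python
import math


def max_jitter_half_lsb_s(bits, tone_hz=20_000):
    """Timing error at which a full-scale sine of tone_hz, at its steepest point,
    moves by half an LSB. Amplitude is 2**(bits-1) LSB, peak slope is
    2*pi*f*amplitude, so dt = 0.5 / slope = 1 / (2*pi*f * 2**bits)."""
    return 1 / (2 * math.pi * tone_hz * 2 ** bits)


for bits in (16, 20, 24):
    print(f"{bits}-bit: {max_jitter_half_lsb_s(bits) * 1e12:.1f} ps")
```

This gives about 121 ps for 16 bits and about 7.6 ps for 20 bits.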

Since many measurements performed by Stereophile [3] (which don't specify any particular frequency content) are close to or above 150 psec, I do not believe that we have reached the limits of jitter audibility yet. I suspect that P-P jitter needs to be almost an order of magnitude smaller, around 15 psec, to be inaudible in all systems.

The ability of the human ear/brain, particularly the trained ear, to hear minute differences, especially those caused by data-correlated jitter, is grossly underestimated. The live-listening ABX studies published to date (that I have read) are inconclusive IMO: the systems used were not resolving enough, the recording quality was not good enough, and the test signals were random rather than correlated, and therefore inadequate for properly testing jitter audibility. I tend to believe the numbers arrived at by the AES analytical studies rather than the ABX listening tests.

A series of double-blind tests is being performed by many audiophiles using synthetic jitter tracks provided by HDTracks. These may shed new light on true audibility. Again, these experiments are only as good as the quality of the tracks provided, the jitter that was synthesized, and the audio systems used for testing. The results from the first set of jitter tracks show just how unresolving most audiophile systems are. A couple of listeners could rank the majority of the tracks by increasing jitter, but most could not hear any difference between the tracks, even though the jitter ranged, I believe, from 0 ns to 1000 ns.

Another interesting aspect of jitter audibility is its ability to mask other sibilance in a system. Sometimes, when the jitter in a system is reduced, sibilance from other components becomes obvious and even more objectionable than the original jitter was. Reducing the jitter is still the right thing to do; then replace the objectionable component. The end result will be much more enjoyable.

Jitter can even be euphonic if it has the right frequency content. Some audiophiles like the effect of even-order harmonics from tubes, and, like tubes, jitter distortion can in some systems "smooth" vocals. Again, the right thing to do is to reduce the jitter and replace the objectionable components. It is fairly easy to become convinced that reducing jitter is not necessarily a positive step; however, this is definitely a trip down the garden path and will ultimately limit your achievement of audio nirvana.

Sibilance caused by preamps, amps, other components and cables can also be so high that changes in jitter are not very audible. This is why there is so much contention on the web forums about jitter and its importance. What matters in the end is whether you are happy with the sound of your system, and whether or not you can hear this distortion.

For the Jitter FAQ, please see Appendix V.