upstateguy

Headphoneus Supremus

- Joined: Dec 20, 2004
- Posts: 4,085
- Likes: 182

Wow is that false. Even 24/44 sounds better than 16/44, given a proper transfer from tape. See The Cars and The Beatles for 24/44 masters.

It's like saying no one can ever see more than 640x480 resolution because you can't see it on your one screen. Stop it. Stop it. Stop it. 1984 called; it wants its argument back.

Let's cut to the chase. For a stereo PCM file, in terms of effective data rate, are you claiming you can hear a difference between 256 kbps and 1,400 kbps, but you can't hear a difference between 1,400 kbps and 2,800 kbps, or between 1,400 kbps and 5,800 kbps?
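For reference, the data rate of uncompressed PCM is just sample rate × bit depth × channels. The post doesn't say which formats its 2,800k and 5,800k figures correspond to, so the formats below are only illustrative picks, and `pcm_bitrate_kbps` is a hypothetical helper name:

```python
def pcm_bitrate_kbps(sample_rate_hz: int, bit_depth: int, channels: int = 2) -> float:
    """Uncompressed PCM data rate in kilobits per second (1 kbit = 1000 bits)."""
    return sample_rate_hz * bit_depth * channels / 1000


# Illustrative stereo formats (not necessarily the ones the poster had in mind).
formats = {
    "16-bit / 44.1 kHz": (44_100, 16),
    "16-bit / 88.2 kHz": (88_200, 16),
    "24-bit / 96 kHz": (96_000, 24),
    "24-bit / 192 kHz": (192_000, 24),
}

for name, (rate, depth) in formats.items():
    print(f"{name}: {pcm_bitrate_kbps(rate, depth):.1f} kbps")
# 16-bit / 44.1 kHz: 1411.2 kbps
# 16-bit / 88.2 kHz: 2822.4 kbps
# 24-bit / 96 kHz: 4608.0 kbps
# 24-bit / 192 kHz: 9216.0 kbps
```

So "1400k" is CD-quality 16/44.1 stereo (1,411.2 kbps), while the "256k" figure refers to a lossy codec, where the bitrate says nothing directly about bit depth or sample rate.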

Listen to reverb trails. Listen to air in the room. Listen for how many voices (sounds) can be presented at once. Vocal choruses, choirs. Listen to room shape, accuracy of delays, timing cues, width, depth, even timbre of instruments -- all of that improves with higher bitrate.

Yes, bad gear in the signal chain can mask it. If you play through laptop-level circuits, then 16/44 might be all you need. But as soon as you use a real DAC with a good analog stage, it's easy to hear beyond that, especially if you are projecting the sound into a large room.

Btw, my mastering engineer said he takes everything to 24/192 immediately upon delivery, regardless of the resolution it is delivered in, and has done so for over 15 years now. He then applies his processes (mainly EQ), masters the material, and then downsamples and dithers to whatever resolution/format the client requires.
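The engineer's actual tools and settings aren't given. As a generic sketch of only the last step mentioned (reducing word length with dither), here is requantization of floating-point samples to 16-bit using TPDF (triangular) dither, a common choice that is assumed here; sample-rate conversion is not shown, and `requantize_tpdf` is a hypothetical name:

```python
import math
import random


def requantize_tpdf(samples, bit_depth=16, seed=0):
    """Requantize float samples (nominal range -1.0 to 1.0) to signed integers
    of `bit_depth` bits, adding TPDF dither before rounding."""
    rng = random.Random(seed)                 # fixed seed so runs are repeatable
    full_scale = 2 ** (bit_depth - 1)         # 32768 for 16-bit
    lo, hi = -full_scale, full_scale - 1      # legal integer range
    out = []
    for x in samples:
        # Triangular (TPDF) dither: sum of two independent uniform values,
        # each spanning +/-0.5 LSB, so the total spans +/-1 LSB.
        dither = rng.uniform(-0.5, 0.5) + rng.uniform(-0.5, 0.5)
        q = round(x * full_scale + dither)
        out.append(max(lo, min(hi, q)))       # clip instead of wrapping around
    return out


# A 1 kHz tone whose peak is only 0.4 LSB at 16 bits, sampled at 44.1 kHz.
tone = [0.4 / 32768 * math.sin(2 * math.pi * 1000 * n / 44100) for n in range(441)]
plain = [round(x * 32768) for x in tone]      # no dither: every sample rounds to 0
dithered = requantize_tpdf(tone)              # tone is kept, on average, under the dither noise

print(set(plain))                             # {0}
print(any(v != 0 for v in dithered))          # True
```

Without dither, detail below one LSB is simply rounded away; with dither it is traded for a slightly higher, signal-independent noise floor. That trade-off is the usual reason for dithering on the final word-length reduction.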

His reason? "There's more there there." What some call headroom he calls air, and it's critical to the sound of the music (assuming it's not modern pop/rock squashed to high hell).

When you create music from scratch in a studio it's very easy to hear these differences. Perhaps on the train with an iPhone it's not as easy, but the differences are still critical.

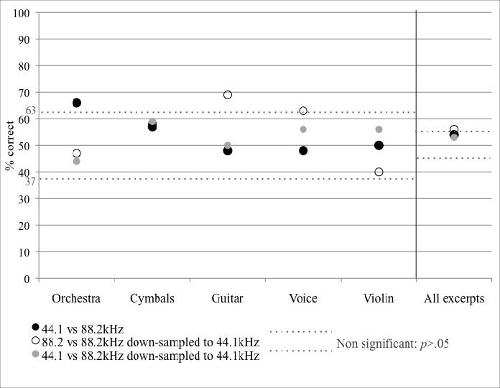

Take a 24-bit file. Convert it to lossless 16-bit / 44.1 kHz. Sounds exactly the same. Always.

The only reason some hi-res files sound different is because they came from a different master. Isolate the variables by converting the files yourself (to ensure you are comparing two different resolutions instead of merely two different masters) and those differences disappear.

As has been stated countless times elsewhere, 24-bit just adds more dynamic range, but 16-bit can already handle the dynamic range of all recordings. And frequencies above roughly 20 kHz are inaudible to humans. These things have no possible benefit in terms of audio playback.
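For context on the two numbers being argued over: the textbook figure for an ideal N-bit quantizer (full-scale sine against uniform quantization noise, with no dither or noise shaping) and the Nyquist limit for a 44.1 kHz sample rate are:

$$
\mathrm{SNR}_{\max} \approx 6.02\,N + 1.76\ \mathrm{dB}
\quad\Rightarrow\quad
N = 16:\ \approx 98.1\ \mathrm{dB}, \qquad N = 24:\ \approx 146.2\ \mathrm{dB}
$$

$$
f_{\max} = \frac{f_s}{2} = \frac{44.1\ \mathrm{kHz}}{2} = 22.05\ \mathrm{kHz}
$$

TPDF dither costs a few dB of that 16-bit figure, and noise shaping can move noise to where the ear is less sensitive. Whether the remaining range is enough for every recording is exactly what this thread disputes; the formulas only give the theoretical limits.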

If you would like to prove otherwise, simply publish a proper ABX test. Many people here can walk you through how to do so.
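An ABX result is usually judged by how likely the score would be from pure guessing, i.e. a one-sided binomial test with p = 0.5 per trial. The 16-trial run and the 0.05 threshold people often use are conventions assumed here, not something stated in the thread; `abx_p_value` is a hypothetical helper:

```python
from math import comb


def abx_p_value(correct: int, trials: int) -> float:
    """One-sided probability of scoring at least `correct` out of `trials`
    ABX trials by pure guessing (each trial is a 50/50 coin flip)."""
    return sum(comb(trials, k) for k in range(correct, trials + 1)) / 2 ** trials


print(f"{abx_p_value(12, 16):.4f}")   # 0.0384
print(f"{abx_p_value(9, 16):.4f}")    # 0.4018
```

So 12 of 16 correct would be unlikely by chance (below 0.05), while 9 of 16 is indistinguishable from guessing.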

Although I've used the same technique to shoot down an argument in the past, I've come to dislike it.

Here's why...

So, OK, now you've made a claim: "Take a 24-bit file. Convert it to lossless 16-bit / 44.1 kHz. Sounds exactly the same. Always."

Sounds exactly the same. Always. Is that so???

I look forward to seeing you back up your claim using your own standard of proof, by "simply publishing a proper ABX test".

Please don't link me to someone else's work.

I would like to review your personal results and have a look at the files you created for the purposes of your test.