Yet even an iPhone 12 could do 11 trillion operations per second in real-time. Billions of filter coefficients is ridiculous when a few thousand is already beyond audibility and 192bit accuracy is just as ridiculous when the input file is 16bit or at most 24bit. But more is always better in the audiophile world, even when it’s actually worse, because audiophiles are willing to pay for the privilege of worse (or no better).

A single operation cannot be directly equated to a filter coefficient. That isn't how things work.

When you process a track like this, you are processing the ENTIRE track at once with those hundreds of millions of filter coefficients, which is why PGGB will in some instances use a couple of hundred gigabytes of RAM if you have it available. It isn't as simple as doing one sample and then moving on to the next.

You can do block processing, but that then limits how many coefficients you can use and has other limitations.
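To make the block processing point a bit more concrete, here's a minimal sketch of overlap-add convolution in plain Python. This is purely illustrative and is NOT PGGB's code; the function names, the tiny signal and the 5-tap filter are all made up for the example. It shows that every block produces a tail of `len(taps) - 1` extra samples that has to be carried into the following blocks, so once the filter is far longer than the block, the carried-over tail dwarfs the block itself.

```python
def convolve(signal, taps):
    """Direct whole-signal FIR convolution: one multiply-add per sample per tap."""
    out = [0] * (len(signal) + len(taps) - 1)
    for i, s in enumerate(signal):
        for j, t in enumerate(taps):
            out[i + j] += s * t
    return out


def overlap_add(signal, taps, block_size):
    """Convolve block by block, adding each block's tail into later output samples."""
    out = [0] * (len(signal) + len(taps) - 1)
    for start in range(0, len(signal), block_size):
        block = signal[start:start + block_size]
        partial = convolve(block, taps)  # len(block) + len(taps) - 1 samples
        for k, v in enumerate(partial):
            out[start + k] += v
    return out


# Hypothetical toy data, integers so the comparison is exact.
signal = [3, -1, 4, 1, -5, 9, 2, -6, 5, 3]
taps = [1, 2, 3, 2, 1]

whole = convolve(signal, taps)
blocked = overlap_add(signal, taps, block_size=4)
tail_per_block = len(taps) - 1  # samples each block spills into later blocks

print(whole == blocked, tail_per_block)
```

With 5 taps the tail is trivial. With hundreds of millions of taps, every block would spill hundreds of millions of samples forward, which is one way to see why block size and filter length end up coupled.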

With regard to the 192-bit precision: this is not about outputting a 192-bit file, but about doing the maths itself with 192-bit precision, which, surprisingly enough, CAN affect the value of 24-bit samples. The bit depth of the file and the precision of the maths are separate things, and I'm sure you're aware of this already. It's the same reason that, with a 16-bit ADC, you can still look lower than -96dB thanks to FFT gain etc.
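For anyone unfamiliar with the FFT gain point, here's a rough back-of-the-envelope sketch. It assumes ideal quantization noise that is white and spread evenly across the bins, a full-scale sine as the reference, and no window correction; the function names and the 65536-point FFT size are just my choices for the example.

```python
import math


def quantization_snr_db(bits):
    """Ideal SNR of a full-scale sine after uniform quantization."""
    return 6.02 * bits + 1.76


def fft_processing_gain_db(fft_size):
    """Noise is split across fft_size / 2 bins, lowering the per-bin floor."""
    return 10 * math.log10(fft_size / 2)


def per_bin_noise_floor_dbfs(bits, fft_size):
    """Approximate noise level seen in each FFT bin, relative to full scale."""
    return -(quantization_snr_db(bits) + fft_processing_gain_db(fft_size))


floor_16bit = per_bin_noise_floor_dbfs(16, 65536)
print(round(floor_16bit, 1))
```

Under those assumptions a 65536-point FFT puts the per-bin floor of a 16-bit signal at roughly -143dBFS, well below the total noise figure of about -98dB, so content under that total can still show up in the plot.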

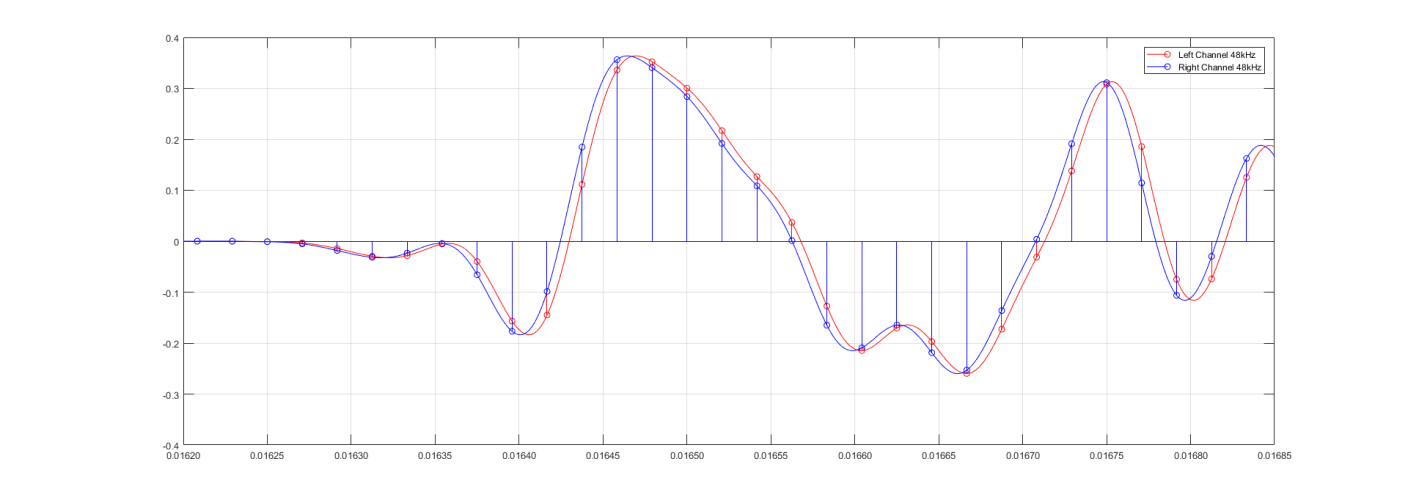

For example, here's a test showing some small-signal content at 44.1kHz upsampled to 705.6kHz using 64-bit precision and output to a 24-bit file.

You can see the noise shaper is effective to about -380dB here. So, very good (64 bit precision is already a lot of course). But to demonstrate that regardless of the fact that we are only outputting to a 24 bit file we can still affect the content:

256 bit precision, still outputting to 24 bit file, and look at the result:

Now we have a noise shaper accurate to around -600dB, and we will be able to provide more accurate phase and amplitude for the reconstructed content. Whether that is audible/useful is an entirely separate debate and this isn't the thread to have that discussion (nor do I really wish to have that debate, as it's pretty clear where we both stand and that's unlikely to change for the moment).

My point is simply that the higher bit precision is not about the file output, but about how the maths itself is done and how that then affects the output of even a 24 bit file.
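A scaled-down toy version of the same effect, if it helps: the sketch below sums the same terms once with the accumulator rounded to 32-bit float after every add and once in 64-bit float, then quantizes both to a 24-bit sample. This is an analogy only (32 vs 64 bit rather than 64 vs 256 bit); the terms are hand-picked to make the effect obvious, and none of it is taken from PGGB.

```python
import struct


def to_float32(x):
    """Round a 64-bit Python float to the nearest 32-bit float."""
    return struct.unpack("f", struct.pack("f", x))[0]


def accumulate(terms, low_precision):
    """Sum terms, optionally rounding the accumulator to float32 after each add."""
    acc = 0.0
    for t in terms:
        acc = acc + t
        if low_precision:
            acc = to_float32(acc)
    return acc


def to_24bit(x):
    """Quantize a value in [-1, 1) to a signed 24-bit integer sample."""
    return round(x * 2**23)


# One large term followed by eight tiny ones, each a quarter of a float32 step.
terms = [0.5] + [2.0**-26] * 8

sample_low = to_24bit(accumulate(terms, low_precision=True))
sample_high = to_24bit(accumulate(terms, low_precision=False))
print(sample_low, sample_high)  # 4194304 4194305
```

The low-precision accumulator throws every tiny term away because each one is below its rounding step, so the final 24-bit sample ends up 1 LSB different even though the output format is identical in both cases.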

Lastly, regarding the mentions of PGGB using MATLAB and 'being unoptimised': the dev has added a post to the site discussing this. The TL;DR is that the core library is fully optimized C++ with assembly-level optimization; MATLAB is a shell that provides a user interface and calls the C++ PGGB library. The reason PGGB takes so long to process a file is simply that processing tracks with millions of samples against hundreds of millions of filter coefficients at high levels of mathematical precision is a very compute-intensive task, and there isn't a way around that.